Nwz // Shutterstock

Demystifying structured data: How to speak an LLM’s native language

Large language models (LLMs) have fundamentally changed what it means to be found online. These systems do not read content the way a person does, nor do they rank pages the way traditional search engines do.

Instead, they parse meaning, identify relationships, and construct answers from structured patterns. When these patterns are missing, the model is forced to guess, and in the era of AI search, a guess is often the difference between being cited as a source and being skipped entirely.

Schema.org markup removes this ambiguity, providing a machine-readable layer of certainty beneath human-written text. As LLMs become the primary interface between brands and audiences, providing this “native language” is among the most consequential strategic decisions a digital organization can make. Below, elk Marketing explains how structured data helps AI systems interpret and surface content online.

The Shift From Keywords to Entities Starts With Schema

For most of the internet’s history, search operated on a straightforward transaction: match a user’s words to a page that contained those same words. That logic hasn’t disappeared, but AI has added a more sophisticated layer on top of it.

Today, systems like Google and LLMs don’t stop at matching phrases. They search for entities, specific, identifiable things like people, organizations, products, places, and concepts that carry meaning regardless of how they’re phrased.

Entity optimization builds on traditional SEO rather than replacing it. Where keyword optimization focuses on what words appear on a page, entity optimization establishes what something definitively is, so AI systems can recognize it, trust it, and connect it to a broader network of known relationships.

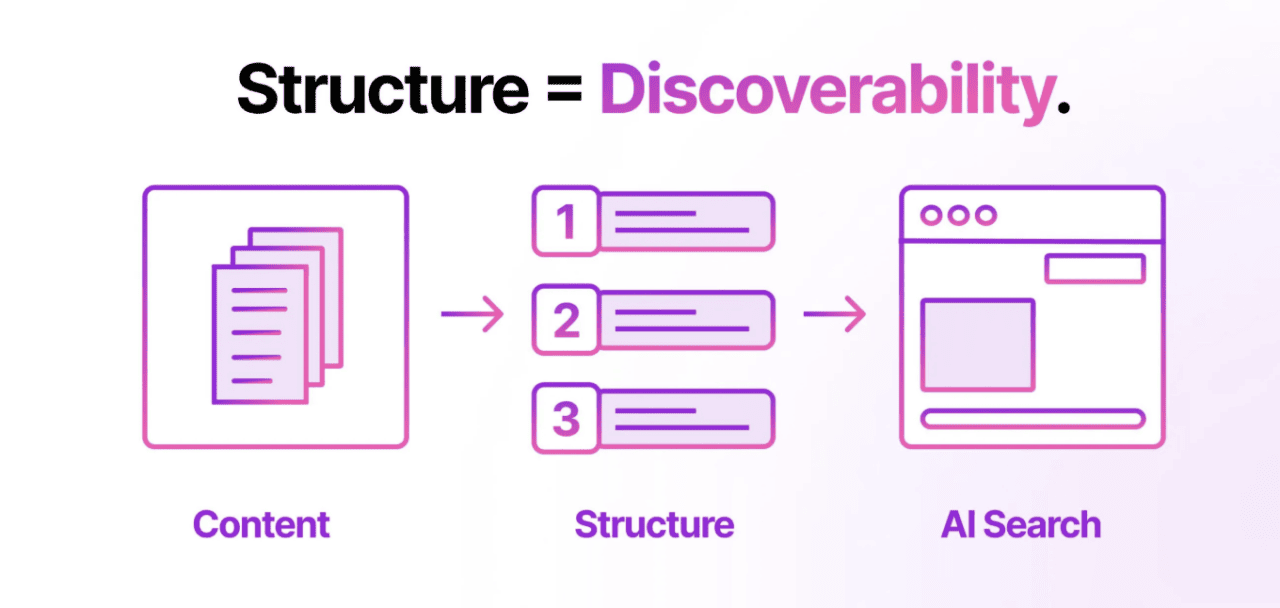

The future of search visibility isn’t about whether a page mentions a topic; it’s about whether a system can confidently identify the entities behind that topic. Structured data provides that certainty, transforming content from descriptive text into a set of verifiable relationships that AI systems can reliably cite.

Schema.org is the mechanism that makes this possible, founded by Google, Bing, Yahoo, and Yandex as a shared vocabulary that translates human content into machine-confirmed meaning. That confirmation strengthens semantic relevance, improves recognition across knowledge graphs, and ultimately determines whether a brand earns a citation or gets bypassed entirely.

elk Marketing

How Structured Data Feeds the Knowledge Graph

Knowledge graphs are how AI systems and search engines store what they know. Google launched its own in 2012, and it now holds hundreds of billions of facts about entities and the relationships connecting them.

When a user submits a query, Google doesn’t just scan web pages. It cross-references what it already knows, pulling established relationships from that graph to deliver answers with confidence rather than approximation.

Structured data is what keeps that graph accurate and current. When a business implements schema markup, it makes entity relationships explicit, confirming not just that an organization exists, but what it does, who leads it, where it operates, and how it connects to other verified entities.

That contextual trust is what earns recognition across AI systems, and it sets the stage for how specific schema types do the work at the ground level.

The Schema Types That Power Machine Readability

However, not all structured data carries equal weight, and the schema types that matter most to AI systems are the ones that remove interpretive guesswork at the most critical points of a page.

Organization schema anchors a brand’s identity, establishing who a company is at the entity level before any content gets evaluated. Article schema confirms authorship, publication date, and content classification, the signals that determine whether a piece is citation-worthy or simply crawlable.

Product schema defines pricing, attributes, and availability explicitly, so machines work from confirmed facts rather than approximations. FAQPage schema delivers direct answers in a format that AI-generated responses were practically built to consume.

HowTo schema converts sequential instructions into structured, extractable steps. Together, these types form a machine-readable architecture beneath the content a human audience reads, and that architecture is what LLMs reach for first when constructing an answer.

elk Marketing

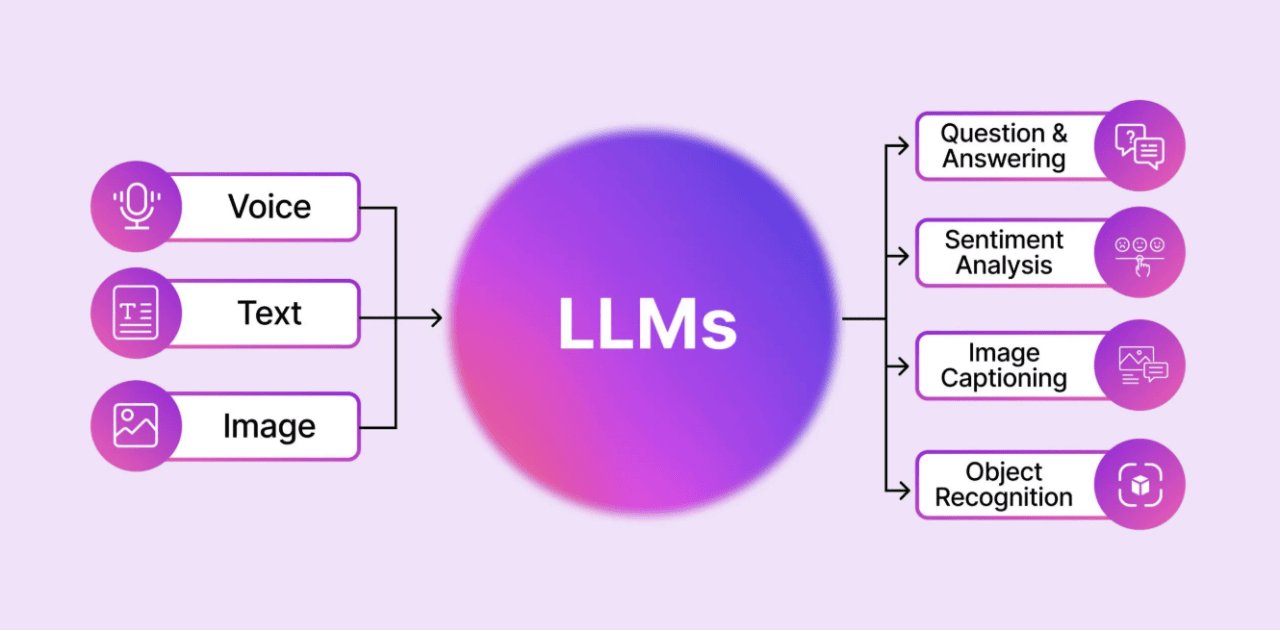

How LLMs Interpret Structured Information

While LLMs possess an uncanny ability to process natural language, their effectiveness is often hindered by a “structural blind spot.” Industry observers have consistently noted that even the most advanced models frequently misinterpret meaning when forced to flatten complex data into linear sequences.

Structured data resolves this by acting as a “ground truth” layer that eliminates probabilistic guesswork. By labeling entities and relationships, schema functions as a critical efficiency tool rather than a mere ranking tactic. It supports entity recognition by providing explicit anchors for subjects, which sharpens contextual understanding beyond surface text.

This precision enables direct answer extraction, allowing AI to generate concise summaries without the risk of “hallucination” — the tendency for models to fabricate facts when data is ambiguous.

Ultimately, this structured framework provides computational efficiency, reducing the processing load on AI systems by offering a machine-readable map that ensures faster parsing and higher citation accuracy.

Organizational Adoption: Turning Structured Data Into Scalable Infrastructure

Achieving machine-readable precision at scale requires moving beyond manual tagging toward robust, automated infrastructure. Strategic implementation separates volatile data from evergreen assets to prevent “schema drift.”

Dynamic schemas are essential for product detail pages (PDPs) and listing pages (PLPs), where real-time JSON-LD updates ensure AI systems ingest current pricing and availability. Conversely, static schemas anchor core brand identity and instructional how-to guides.

This transition demands cross-functional alignment between engineering, SEO, and content teams to integrate structured data into the core CMS architecture. By treating schema as a living asset rather than a technical afterthought, organizations ensure their digital identity remains accurate across thousands of pages.

Such scalable automation provides the foundational clarity necessary for AI models to verify authority without manual intervention.

The Future Belongs to What Machines Can Read

As AI search continues to reduce reliance on unstructured interpretation, the organizations paying closest attention are no longer asking whether structured data matters. They already know it does. That recognition has shifted schema from a technical consideration into foundational digital infrastructure, the kind that compounds in value the longer it stays in place.

Machine readability is now the baseline requirement for discoverability, and the systems that determine who gets cited are increasingly solving for certainty over volume. OpenAI Chief Product Officer Kevin Weil has noted that as real-time information floods the web, these structures allow AI to improve its answers and function as a better assistant.

Speaking the native language of an LLM is about reducing ambiguity to enable precision at scale, and businesses that build that clarity now are the ones AI systems will keep reaching for.

This story was produced by elk Marketing and reviewed and distributed by Stacker.

![]()